The Evolving Landscape of Online Child Safety in 2026

The digital world of 2026 is both a marvel and a minefield for children. With nearly universal smartphone access among teens in developed economies and rapidly expanding connectivity in emerging markets, children are engaging with digital platforms earlier and more frequently than ever before. While the internet offers unprecedented opportunities for learning, creativity, and socialization, it also exposes young users to a complex array of risks, many of which have intensified or evolved due to advances in artificial intelligence, the proliferation of new platforms, and the global reach of online communities.

Recent years have witnessed a surge in online harms targeting minors, from cyberbullying and grooming to privacy breaches and exposure to harmful or manipulative content. The emergence of AI-generated threats, such as deepfakes and synthetic sexual abuse material, has further complicated the challenge of keeping children safe online. Meanwhile, regulatory frameworks are racing to keep pace with technological innovation, and industry responses vary widely in effectiveness and transparency.

This comprehensive analysis examines the biggest online dangers for children in 2026, drawing on the latest data, expert commentary, and case studies. It also provides practical guidance for parents, educators, policymakers, and industry leaders committed to building a safer digital future for young users.

1. The Major Online Dangers Facing Children in 2026

Children’s online risks can be categorized into several broad domains, each with unique characteristics and evolving threats:

- Cyberbullying and Online Abuse: Persistent harassment, humiliation, and exclusion via digital platforms.

- Online Predators and Grooming Networks: Sophisticated efforts to manipulate, exploit, or coerce children, often across multiple platforms.

- AI-Generated Threats: Deepfakes, synthetic sexual abuse material, and algorithmically amplified manipulation.

- Algorithmic Manipulation and Addictive Design: Features engineered to maximize engagement, sometimes at the expense of wellbeing.

- Privacy Breaches and Data Exploitation: Commercial and criminal misuse of children’s personal information.

- Exposure to Harmful Content: Including pornography, violence, self-harm, disinformation, and radicalization.

- Platform-Specific Risks: Unique dangers associated with popular platforms such as Roblox, TikTok, and Discord.

- Emerging and Cross-Cutting Risks: Including quantum computing, extended reality, and the intersection of online and offline vulnerabilities.

These risks are not mutually exclusive and often intersect, compounding their impact on children’s safety, privacy, and development.

2. Cyberbullying: Prevalence, Platforms, and Impacts in 2026

2.1. The Scope and Scale of Cyberbullying

Cyberbullying remains one of the most pervasive online dangers for children. In 2026, an estimated 21% of children aged 10–18 have experienced cyberbullying, with prevalence increasing with age and among certain demographic groups. The platforms most often involved are YouTube (79% of cases), Snapchat (69%), TikTok (64%), and Facebook (49%); these shares add up to more than 100% because many cases span more than one platform.

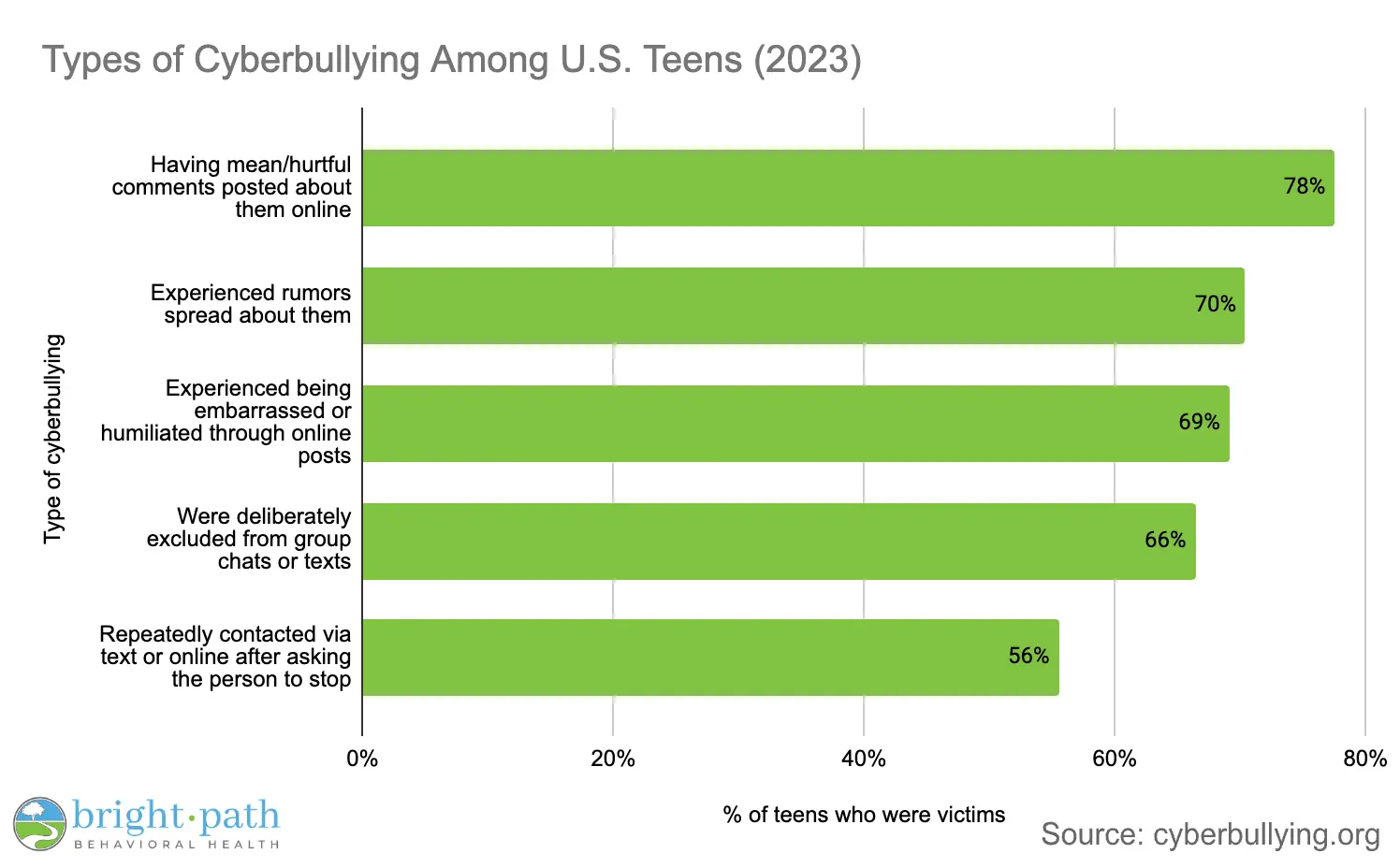

Cyberbullying manifests in various forms:

- Name-calling, harassment, and exclusion.

- The spread of rumors or embarrassing content.

- Doxing (publishing private information).

- The viral sharing of humiliating images or videos.

2.2. Psychological and Social Impacts

The consequences of cyberbullying are profound and enduring. Victims report feelings of anger, insecurity, and low self-worth. Two-thirds of cyberbullying victims say it negatively affects their self-esteem, and nearly a third report impacts on friendships and social life. Long-term effects include anxiety, depression, withdrawal from school or activities, and in severe cases, self-harm or suicidal ideation.

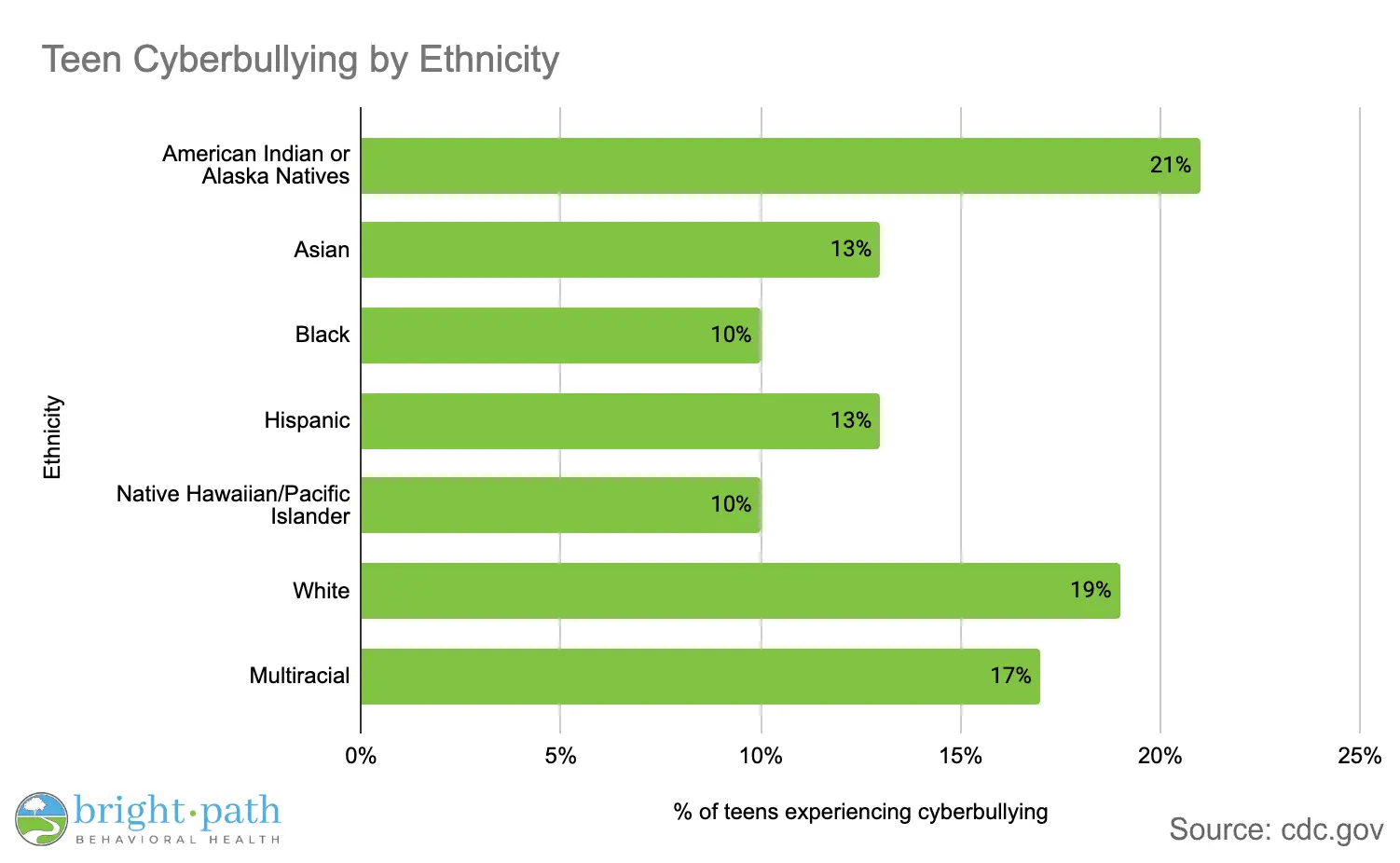

2.3. Demographic and Equity Considerations

Children from lower-income households are twice as likely to be cyberbullied as those from higher-income families (22% vs. 11%). Girls, LGBTQ+ youth, and children with disabilities are at heightened risk, often facing gendered or identity-based harassment.

2.4. Platform and Industry Responses

While most major platforms have implemented reporting and blocking tools, enforcement remains inconsistent. Many teens feel that social media companies and policymakers are not doing enough to address cyberbullying, and calls for stronger regulation and industry accountability are growing.

3. Online Predators and Grooming Networks: The Rise of ‘The Com’ and Beyond

3.1. The New Face of Online Grooming

Online grooming has become more sophisticated and organized, with networks such as “The Com” (short for “The Community”) using coordinated tactics to exploit children as young as eight. These groups operate across platforms including Discord, Telegram, Snapchat, Roblox, Minecraft, Twitch, and Steam.

Key grooming tactics include:

- Building trust through friendship or romantic attention.

- Manipulation via fear, guilt, or intimidation.

- Coercion into sharing exploitative images or videos.

- Pressuring children to engage in self-harm, criminal activity (e.g., swatting), or the spread of harmful content.

3.2. Prevalence and Detection

Despite the scale of the problem, only 12% of online grooming cases are reported to authorities; most victims cite fear, shame, or not knowing how to report. Among cases that are detected, law enforcement identifies about 45%, parents about 30%, and peers about 22%. Third-party apps and platform monitoring play a growing role in detection.

3.3. Psychological and Developmental Impacts

The trauma of online grooming is severe and long-lasting:

- 82% of victims experience anxiety or depression within six months.

- 43% develop PTSD, with symptoms persisting for years.

- 49% report suicidal ideation; 12% attempt suicide.

- 84% engage in self-harm; 29% report severe self-harm.

- 71% withdraw from school or social activities.

- 67% struggle with trust in relationships, even years later.

These impacts underscore the urgent need for early intervention, support services, and trauma-informed care.

3.4. Platform-Specific Risks and Notable Incidents

Platforms with chat or group features, such as Roblox and Discord, are frequently exploited by predators. Recent lawsuits and investigations allege that Roblox failed to implement adequate safeguards, exposing its tens of millions of child users to predators. By the end of 2025, more than 100 lawsuits alleging sexual exploitation, grooming, and inadequate age verification had been filed against Roblox, many of them consolidated in federal court.

4. AI-Generated Threats: Deepfakes, Sexual Extortion, and Synthetic Media

4.1. The Explosion of AI-Generated Child Sexual Abuse Material (CSAM)

The rapid advancement of generative AI has fueled an unprecedented surge in synthetic child sexual abuse material. Reports of AI-generated CSAM to the National Center for Missing & Exploited Children (NCMEC) rose by 1,325% between 2023 and 2024, and more than 440,000 such reports arrived in the first half of 2025 alone. Overall, NCMEC's CyberTipline received 20.5 million reports of suspected child sexual exploitation in 2024, and some analyses put the year-over-year growth in AI-related cases as high as 6,344%.

A UNICEF-led study found that, across the countries surveyed, at least 1.2 million children had their images manipulated into sexually explicit deepfakes in the past year; in some countries this amounts to one in 25 children.

4.2. Deepfakes and ‘Nudification’ Tools

Deepfakes—AI-generated images, videos, or audio designed to look real—are increasingly used to produce sexualized content involving children. “Nudification” tools can strip or alter clothing in photos to create fabricated nude images, often used for sexual extortion or bullying.

4.3. Psychological and Social Impacts

Victims of AI-generated abuse experience direct victimization, even when the images are synthetic. The harm includes:

- Severe emotional distress, anxiety, and depression.

- Social isolation and withdrawal.

- Stigmatization and reputational damage.

- Increased risk of self-harm and suicide.

Children are acutely aware of these risks; up to two-thirds express concern about AI being used to create fake sexual images or videos.

4.4. Detection and Prevention Technologies

The arms race between deepfake creation and detection is intensifying. Leading detection tools now use multimodal AI to analyze microexpressions, audio-visual sync, and physiological signals, achieving 95–98% accuracy in some evaluations. Content Credentials, an open provenance standard developed by the Coalition for Content Provenance and Authenticity (C2PA), offer a promising way to verify where media came from, but they are not yet widely deployed.

However, no tool is foolproof. Human judgment, media literacy, and source verification remain essential, especially as real-time deepfake synthesis becomes possible in video calls and live streams.
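A rough calculation shows why even the 95–98% accuracy figures cited above do not make a detector foolproof at platform scale. The sketch below is a hypothetical illustration: the volume screened (one million items), the share that is synthetic (1%), and the detector's 97% hit rate on both fakes and authentic media are assumptions chosen for the example, not measurements from any real tool.

```python
# Hypothetical illustration: expected errors from a "97% accurate" deepfake
# detector screening a large volume of media. All inputs are assumptions.

def screening_outcomes(items: int, prevalence: float,
                       sensitivity: float, specificity: float) -> dict:
    """Expected counts when a detector screens `items` pieces of media.

    prevalence  -- assumed share of items that are actually synthetic
    sensitivity -- assumed share of synthetic items the detector flags
    specificity -- assumed share of authentic items the detector passes
    """
    fakes = items * prevalence
    genuine = items - fakes
    caught = fakes * sensitivity                  # fakes correctly flagged
    missed = fakes - caught                       # fakes that slip through
    false_alarms = genuine * (1 - specificity)    # authentic items wrongly flagged
    return {
        "caught": round(caught),
        "missed": round(missed),
        "false_alarms": round(false_alarms),
        # Precision: share of flagged items that really are synthetic.
        "precision": caught / (caught + false_alarms),
    }


if __name__ == "__main__":
    print(screening_outcomes(items=1_000_000, prevalence=0.01,
                             sensitivity=0.97, specificity=0.97))
```

Under these assumed inputs the detector catches roughly 9,700 synthetic items, misses about 300, and wrongly flags about 29,700 authentic ones, so only about one flagged item in four is actually synthetic. Different assumptions change the numbers, but the pattern explains why human review and source verification remain necessary alongside highly accurate tools.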

4.5. Regulatory and Industry Responses

UNICEF and the UN have called for immediate action to criminalize the creation, possession, and distribution of AI-generated CSAM, and for robust safety-by-design approaches in AI development. Some platforms have begun implementing detection and moderation tools, but coverage and effectiveness remain inconsistent.

5. Algorithmic Manipulation and Addictive Design Targeting Minors

5.1. The Mechanics of Manipulation

Many digital platforms employ algorithmic recommender systems and persuasive design features to maximize user engagement. For children, these features can amplify exposure to harmful content, encourage compulsive use, and exploit developmental vulnerabilities.

Common manipulative features include:

- Infinite scroll and autoplay.

- Streaks, ephemeral content, and push notifications.

- Algorithmic amplification of sensational or distressing content.

- Personalized advertising and in-app purchases (e.g., loot boxes).

5.2. Regulatory Developments

The EU’s Digital Services Act (DSA), the UK’s Online Safety Act (OSA), and similar laws now require platforms to implement age-appropriate design, restrict profiling-based advertising for minors, and limit addictive features by default [20, 21]. The European Commission’s 2025 guidelines recommend:

- Setting minors’ accounts to private by default.

- Disabling features that contribute to excessive use.

- Empowering children to control their feeds and block unwanted contacts.

- Prohibiting manipulative commercial practices and hidden advertising.
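To make "by default" concrete, the sketch below maps the guideline items above onto the settings a new account starts with. The setting names, the under-18 threshold, and the adult defaults are hypothetical assumptions chosen for illustration; they do not come from the Commission's guidelines or from any platform's actual configuration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AccountDefaults:
    """Hypothetical starting settings for a new account (names are illustrative)."""
    profile_private: bool
    autoplay: bool
    streaks: bool
    push_notifications: bool
    profiling_based_ads: bool
    messages_from_strangers: bool


def defaults_for(age: int) -> AccountDefaults:
    """Choose starting settings, assuming anyone under 18 is treated as a minor."""
    if age < 18:
        return AccountDefaults(
            profile_private=True,            # account private by default
            autoplay=False,                  # features linked to excessive use start off
            streaks=False,
            push_notifications=False,
            profiling_based_ads=False,       # no profiling-based advertising for minors
            messages_from_strangers=False,   # unwanted contacts blocked from the start
        )
    # Adult defaults, included only for contrast; also hypothetical.
    return AccountDefaults(
        profile_private=False,
        autoplay=True,
        streaks=True,
        push_notifications=True,
        profiling_based_ads=True,
        messages_from_strangers=True,
    )


if __name__ == "__main__":
    print(defaults_for(14))
```

The design choice illustrated is that protective settings are the starting state for minors, rather than options a child or parent must find and switch on.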

5.3. Industry and Platform Responses

Some platforms have begun to comply by introducing stricter age verification, enhanced parental controls, and safer default settings. For example, Roblox began requiring facial age estimation checks for chat access in 2026, and YouTube rolled out supervised accounts with customizable content filters. However, enforcement and transparency vary, and many platforms continue to prioritize engagement over safety.

5.4. Psychological and Developmental Impacts

Algorithmic manipulation can lead to:

- Increased screen time and digital dependency.

- Exposure to harmful or age-inappropriate content.

- Reinforcement of negative body image, self-harm, or extremist ideologies.

- Impaired sleep, attention, and social development.

6. Privacy Breaches, Data Harvesting, and Commercial Exploitation

6.1. The Commercialization of Childhood

Children’s personal data is a lucrative commodity for advertisers, data brokers, and even criminal actors. Platforms often collect, profile, and monetize children’s data through tracking, targeted advertising, and in-app purchases.

Risks include:

- Unauthorized sharing or sale of personal information.

- Exposure to manipulative advertising and influencer marketing.

- Commercial profiling and behavioral nudging.

- Security risks from data breaches or identity theft.

6.2. Regulatory Responses

The EU, UK, and US have introduced stricter rules for children’s data protection:

- Default privacy settings: Children’s accounts must be private by default.

- Location and biometric data: Must not be accessible or collected without explicit consent.

- Age verification: Must be robust and difficult to bypass.

- Transparency and fairness: Platforms must explain data practices in child-friendly language and offer opt-in choices for personalization.

The EU’s Digital Services Act and GDPR, the UK’s OSA and Age-Appropriate Design Code, and the US COPPA updates all mandate enhanced protections for minors.

6.3. Industry Compliance and Gaps

While some platforms have improved privacy controls, many still fall short. Reports indicate that a significant proportion of platforms popular with children do not publish information on how they address child exploitation or data protection. Enforcement actions and lawsuits are increasing, but gaps remain, especially in cross-border contexts.

7. Exposure to Harmful Content: Pornography, Violence, Self-Harm, and Disinformation

7.1. Types of Harmful Content

Children are routinely exposed to a spectrum of harmful content online, including:

- Pornography and sexual content.

- Violent or graphic imagery.

- Content promoting self-harm, suicide, or eating disorders.

- Disinformation, hate speech, and extremist ideologies.

- Promotion of illegal activities (e.g., drug use, gambling).

A 2025 OECD report found that over 60% of children aged 9–16 had encountered at least one form of harmful content online, with girls more likely to experience unwanted sexual attention and boys more likely to encounter violent or risky behavior content.

7.2. Algorithmic Amplification and Rabbit Holes

Recommender systems can inadvertently amplify exposure to harmful content, especially when children engage with sensational or distressing material. This can lead to “rabbit holes” where children are repeatedly exposed to more extreme or unhealthy content.
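The "rabbit hole" feedback loop can be illustrated with a deliberately simplified toy model. Everything in it is a hypothetical assumption, not how any real platform's recommender works: each item has an "intensity" score, the recommender only offers items similar to the last one shown, and it assumes more intense items are more engaging. The optional `cap` stands in for a safety filter of the kind regulators now expect for minors.

```python
def recommend_sequence(start: float, steps: int, window: float = 0.1,
                       cap: float = 1.0) -> list[float]:
    """Toy engagement-maximising recommender; returns the intensity of each item shown.

    Hypothetical assumptions (not any real platform's algorithm):
    - every item has an "intensity" between 0.0 (mild) and 1.0 (extreme);
    - the recommender only considers items within +/- `window` of the
      intensity of the last item shown (i.e. "similar" content);
    - predicted engagement rises with intensity, so it picks the most
      intense candidate it is allowed to show;
    - `cap` is an optional safety limit on intensity, such as a filter for minors.
    """
    if window < 0.01 or not 0.0 <= start <= cap <= 1.0:
        raise ValueError("need window >= 0.01 and 0 <= start <= cap <= 1")
    catalog = [i / 100 for i in range(101)]   # items at intensity 0.00 .. 1.00
    current = start
    shown = []
    for _ in range(steps):
        # Similar items that the (optional) safety cap still permits.
        candidates = [x for x in catalog
                      if abs(x - current) <= window + 1e-9 and x <= cap]
        current = max(candidates)             # "most engaging" under the assumption
        shown.append(round(current, 2))
    return shown


if __name__ == "__main__":
    print(recommend_sequence(start=0.1, steps=12))           # no safety cap
    print(recommend_sequence(start=0.1, steps=12, cap=0.5))  # capped feed
```

Starting from mild content at 0.1, the uncapped feed reaches maximum intensity after nine recommendations even though each step is only a small move from the one before; with the cap at 0.5 it levels off. Real recommender systems are far more complex, but the sketch shows the loop the text describes: each engagement-driven pick becomes the baseline for the next.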

7.3. Psychological and Social Impacts

Exposure to harmful content is linked to:

- Short-term distress, anxiety, or upset.

- Normalization of risky or illegal behaviors.

- Reinforcement of negative body image or self-harm.

- Increased risk of radicalization or recruitment by extremist groups.

Vulnerable children—those with limited offline support, pre-existing mental health conditions, or disabilities—are at heightened risk of harm.

7.4. Regulatory and Platform Responses

The DSA, OSA, and similar laws now require platforms to:

- Implement effective age assurance for access to adult content.

- Moderate and promptly remove illegal or harmful material.

- Provide clear reporting and support mechanisms for users.

However, enforcement and coverage remain inconsistent, and children continue to encounter harmful content across platforms.

8. Platform-Specific Risks: Roblox, TikTok, Discord, and the 2026 Roblox Lawsuits

8.1. Roblox: A Case Study in Platform Risk

Roblox, one of the world’s most popular gaming platforms for children, has faced intense scrutiny and legal action over allegations that it facilitated child sexual exploitation and grooming and maintained inadequate safety measures. As of January 2026, more than 100 lawsuits had been filed against Roblox, many of them consolidated in federal court, claiming that the company prioritized growth over user safety and failed to implement robust age verification or parental controls.

Key issues include:

- Predators posing as minors to access chat and voice features.

- Inadequate moderation of user-generated content.

- Migration of conversations to less monitored platforms (e.g., Discord, Snapchat).

- Exposure to gambling-like features and addictive design.

In response, Roblox has introduced facial age estimation for chat access and enhanced parental dashboards, but critics argue that these measures are overdue and insufficient.

8.2. TikTok and Discord

TikTok remains a hotspot for cyberbullying, exposure to harmful trends, and algorithmic manipulation. Discord, often used for off-platform communication, is frequently exploited by predators for grooming and the distribution of illicit material.

8.3. Regulatory and Legal Actions

Multiple US states and international regulators are investigating or suing platforms for failing to protect minors. The outcomes of these cases are likely to set important precedents for industry accountability and platform design.

9. Legal and Regulatory Landscape in 2026: Age Assurance, Online Safety Acts, DSA, COPPA Updates

9.1. Age Assurance and Verification

Age assurance technologies are now central to online child safety. Laws in a growing number of US states, as well as in the EU, UK, Australia, and other jurisdictions, require platforms to implement robust age checks, moving beyond self-declaration to methods such as facial age estimation, digital identity wallets, and mobile network verification.

Australia’s social media minimum-age law, which took effect in December 2025, bars children under 16 from holding accounts on major social media platforms, and several countries are preparing similar legislation. The EU is piloting an official age verification “mini wallet,” and the US is considering federal standards for age assurance.

9.2. Online Safety Acts and DSA

The UK’s Online Safety Act and the EU’s Digital Services Act impose strict obligations on platforms to:

- Assess and mitigate risks to minors.

- Implement age-appropriate design and privacy by default.

- Restrict profiling-based advertising for children.

- Moderate and remove harmful content promptly.

- Provide transparent reporting and accountability.

Enforcement actions are increasing, with regulators focusing on compliance, risk assessments, and the effectiveness of mitigation measures.

9.3. COPPA and US Legislation

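The US Federal Trade Commission finalized amendments to its Children’s Online Privacy Protection Act (COPPA) Rule in 2025. They require separate verifiable parental consent before children’s data is disclosed to third parties such as advertisers, add biometric identifiers to the definition of personal information, and impose data-retention and security requirements. The TAKE IT DOWN Act, signed in May 2025, criminalizes the publication of non-consensual intimate images, including AI-generated deepfakes, and requires covered platforms to remove such content within 48 hours of a valid request.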

9.4. International and UN/UNICEF Guidance

The UN, UNICEF, and the International Telecommunication Union (ITU) have issued the first global framework for AI and children’s rights, emphasizing transparency, accountability, and child-centered design throughout the AI lifecycle. The framework urges governments and companies to:

- Integrate children’s rights into all AI-related policies.

- Implement robust safeguards against AI-enabled exploitation.

- Ensure inclusive, bias-free AI and meaningful child participation in policymaking.

10. International Responses and Guidance: UN, UNICEF, and Global Frameworks

10.1. The Joint Statement on Artificial Intelligence and the Rights of the Child

In January 2026, the UN Committee on the Rights of the Child, UNICEF, ITU, and 14 UN entities adopted the first international framework dedicated to ensuring that AI is designed, developed, and used in line with children’s rights. The framework sets out 11 priority areas, including:

- Child rights-based AI governance.

- Responsibility, accountability, and transparency.

- Child safety and protection from AI-enabled violence and exploitation.

- Data protection and privacy.

- Non-discrimination, inclusion, and child participation.

- Education, capacity building, and AI literacy.

10.2. Recommendations for States and Industry

The UN urges states to:

- Strengthen AI governance frameworks to uphold children’s rights.

- Require transparency and accountability in AI systems.

- Criminalize AI-generated child sexual abuse material.

- Invest in digital and AI literacy for children, parents, and educators.

Industry is called upon to:

- Implement safety-by-design and robust guardrails in AI products.

- Prevent the circulation of AI-generated CSAM.

- Conduct regular child rights impact assessments.

11. Statistics and Data Sources: Recent Studies, Incident Reports, and Enforcement Actions

11.1. Key Data Points

| Risk Area | Recent Statistics (2024–2026) |
|---|---|
| Cyberbullying | 21% of children aged 10–18 have experienced cyberbullying; 79% on YouTube, 69% on Snapchat |
| Online Grooming | Only 12% of cases reported; 82% of victims experience anxiety/depression; 49% suicidal ideation |
| AI-Generated CSAM | 1,325% increase in reports (2023–2024); 1.2 million children affected by deepfakes in 2025 |
| Child Exploitation | 20.5 million reports to CyberTipline in 2024; 6,344% surge in AI-related cases |
| Platform Lawsuits | 100+ lawsuits against Roblox for child exploitation; 85 cases consolidated in federal court |
| Harmful Content Exposure | 60%+ of children aged 9–16 have encountered harmful content online |
| Addictive Design | 98% of 15-year-olds in OECD countries have smartphones; problematic use rising |
| Privacy Breaches | Majority of platforms lack transparency on child data protection |

These figures highlight the scale and urgency of the online child safety crisis.

12. Psychological and Developmental Impacts of Online Harms

12.1. Mental Health and Wellbeing

Online harms have significant psychological and developmental consequences:

- Increased rates of anxiety, depression, and PTSD.

- Disrupted sleep, attention, and academic performance.

- Social withdrawal, loneliness, and impaired trust in relationships.

- Heightened risk of self-harm and suicide, especially among victims of grooming or sexual extortion.

Children with pre-existing vulnerabilities—such as mental health conditions, disabilities, or limited offline support—are disproportionately affected.

12.2. Gender and Equity Considerations

Girls are more likely to experience unwanted sexual attention, bullying, and body image pressures, while boys are increasingly exposed to violent or risky behavior content. Vulnerable groups, including LGBTQ+ youth and children with disabilities, face compounded risks and distress.

13. Practical Advice for Parents: Controls, Conversations, Digital and AI Literacy

13.1. Building Digital Resilience

Experts agree that keeping children entirely offline is unrealistic and counterproductive. Instead, parents should focus on building digital resilience and open communication.

Key strategies include:

- Set clear ground rules: Use parental controls, time limits, and content filters.

- Engage in regular conversations: Discuss online activities, friends, and potential risks.

- Foster critical thinking: Teach children to question the authenticity of content and recognize manipulative tactics.

- Promote AI literacy: Help children understand the capabilities and limitations of AI tools, including chatbots and generative models.

- Model healthy digital habits: Demonstrate balanced technology use and respectful online behavior.

13.2. Tools and Resources

Platforms like Google Family Link, YouTube Kids, and Gemini for Teens offer customizable controls and educational resources for parents and children. The “Be Internet Awesome” program and AI Literacy Hub provide age-appropriate guides and workshops.

13.3. Recognizing Warning Signs

Parents should watch for:

- Withdrawal, secrecy, or mood changes.

- Increased or secretive device use.

- Unexplained gifts or changes in behavior.

- Reluctance to discuss online activities.

If concerns arise, parents should seek support from schools, specialist organizations, or law enforcement as appropriate.

14. Practical Advice for Educators and Schools: Policies, Reporting, and Digital Citizenship

14.1. School Policies and Safeguarding

Schools play a critical role in online safety. Effective policies should:

- Integrate online safety into the curriculum (e.g., PSHE, computing).

- Provide regular training for staff and students on digital risks and reporting procedures.

- Establish clear protocols for responding to incidents, including cyberbullying, grooming, and exposure to harmful content.

- Collaborate with parents and external agencies as needed.

14.2. Promoting Digital Citizenship

Digital citizenship education should cover:

- Respectful online communication and empathy.

- Recognizing and reporting abuse or harmful content.

- Understanding privacy, data protection, and digital footprints.

- Critical evaluation of information and media literacy.

Peer-to-peer education and collaborative onboarding processes can help set positive online norms.

15. Practical Advice for Policymakers: Regulation, Enforcement, and Cross-Border Cooperation

15.1. Strengthening Legal Frameworks

Policymakers should:

- Mandate robust age assurance and verification for all platforms accessible to minors.

- Criminalize the creation, possession, and distribution of AI-generated CSAM.

- Require transparency, accountability, and regular risk assessments from platforms.

- Fund law enforcement training and cross-border cooperation to address global exploitation networks.

15.2. Supporting Prevention and Education

Invest in:

- Digital and AI literacy programs for children, parents, and educators.

- Accessible reporting and support services for victims.

- Research and data collection to inform evidence-based policy.

International collaboration is essential, given the borderless nature of online harms.

16. Industry Responsibilities and Platform Mitigation Measures

16.1. Safety-by-Design and Proactive Measures

Industry must:

- Implement safety-by-design principles, including robust moderation, age assurance, and privacy-by-default settings.

- Regularly audit and update detection tools for grooming, CSAM, and deepfakes.

- Provide transparent reporting, user controls, and accessible support channels.

- Collaborate with researchers, civil society, and regulators to address emerging threats.

16.2. Accountability and Transparency

Platforms should publish regular transparency reports, engage with independent audits, and involve children and youth in the design and evaluation of safety features.

17. Tools and Technologies for Detection and Prevention

17.1. AI-Powered Detection

State-of-the-art tools now use multimodal AI to detect grooming, deepfakes, and harmful content in real time, analyzing visual, audio, and behavioral cues. Content Credentials based on the C2PA standard, along with other provenance verification methods, are emerging as long-term solutions.

17.2. Age Assurance and Verification

Technologies include facial age estimation, digital identity wallets, mobile network verification, and bank account linking. The EU Digital Identity Wallet and Australia’s age assurance app are leading examples.

17.3. Parental Controls and Monitoring

Platforms offer customizable controls for screen time, content filtering, and activity monitoring. However, these tools are most effective when combined with open communication and education.

18. Case Studies and Notable Incidents (2024–2026)

- Roblox Lawsuits (2025–2026): Over 100 lawsuits allege that Roblox facilitated child exploitation and failed to implement adequate safeguards, prompting regulatory investigations and platform changes.

- AI-Generated CSAM Surge (2023–2025): Reports of AI-generated abuse material to NCMEC rose by 1,325% between 2023 and 2024 and continued to climb in 2025, with law enforcement and platforms struggling to keep pace.

- Australia’s Social Media Ban (2025): Australia became the first country to ban social media accounts for children under 16, sparking global debate and legislative action in other countries.

- The Com Grooming Network (2026): Coordinated predator groups exploited multiple platforms, leading to school investigations and law enforcement crackdowns in North America and Europe.

19. Equity and Vulnerable Groups: Age, Gender, and Socioeconomic Status

19.1. Differential Risks

- Age: Younger children are less able to recognize and navigate online hazards; teens face increased risks of cyberbullying, grooming, and exposure to harmful content.

- Gender: Girls are more likely to experience sexual harassment, body image pressures, and bullying; boys are increasingly exposed to violent or risky behavior content.

- Socioeconomic Status: Children from lower-income households face higher risks of cyberbullying and exploitation, often due to limited access to digital literacy resources and parental support.

- Disability and Special Needs: Children with disabilities are at heightened risk of abuse, manipulation, and exclusion.

19.2. Inclusive Approaches

Policies and interventions must be tailored to address the unique needs and vulnerabilities of different groups, ensuring equitable access to protection, education, and support.

20. Future Outlook: Predicted Trends and Recommendations for 2027 and Beyond

20.1. Emerging Threats

- Quantum Computing and Extended Reality: New technologies will introduce novel risks and require proactive safety measures.

- Decentralized Platforms: The rise of decentralized and encrypted platforms will challenge detection and enforcement.

- AI Companions and Chatbots: Increasing use of AI companions by children raises concerns about manipulation, privacy, and emotional attachment.

20.2. Recommendations

- Proactive Prevention: Shift from reactive to prevention-based strategies, prioritizing safety-by-design and early intervention.

- Global Collaboration: Strengthen international cooperation on enforcement, research, and standard-setting.

- Continuous Education: Invest in ongoing digital and AI literacy for all stakeholders.

- Child Participation: Involve children and youth in the design, evaluation, and governance of digital platforms and policies.

Building a Safer Digital Future for Children

The online dangers facing children in 2026 are complex, evolving, and deeply intertwined with technological, social, and regulatory trends. While significant progress has been made in recognizing and addressing these risks, the pace of innovation and the scale of the challenge demand sustained, coordinated action from parents, educators, policymakers, industry, and children themselves.

By embracing a holistic, evidence-based, and child-centered approach—grounded in robust regulation, proactive industry measures, and inclusive education—we can empower the next generation to explore, learn, and thrive online while minimizing harm and maximizing opportunity.

Every child deserves a safe and supportive digital environment. The time to act is now.

References (30)

- OECD calls for an ambitious approach to protect and empower children online. https://www.oecd.org/en/about/news/press-releases/2025/05/oecd-calls-for-an-ambitious-approach-to-protect-and-empower-children-online.html

- Child and Youth Safety Online | United Nations. https://www.un.org/en/global-issues/child-and-youth-safety-online

- ‘Deepfake abuse is abuse,’ UNICEF warns | UN News. https://news.un.org/en/story/2026/02/1166886

- Proliferation of sexualised images of youngsters by AI alarming, UNICEF .... https://newsverge.com/2026/02/05/proliferation-of-sexualised-images-of-youngsters-by-ai-alarming-unicef-warns/

- Child Sexual Exploitation and Abuse Online Surges Amid Rapid Tech .... https://www.publichealth.columbia.edu/news/child-sexual-exploitation-abuse-online-surges-amid-rapid-tech-change-new-tool-preventing-abuse-unveiled-path-forward

- Experts unveil practical plan to end technology-facilitated child .... https://www.weprotect.org/news/experts-unveil-practical-plan-to-end-technology-facilitated-child-sexual-abuse-crisis/

- ICT & Online Safety Policy 2025-2026 - knightsbridgeschool.com. https://www.knightsbridgeschool.com/wp-content/uploads/2024/10/ICT-Online-Safety-Policy-2025-2026.pdf

- Europe Unveils New Evidence-Based Guidelines to Advance Safer Platform .... https://kgi.georgetown.edu/research-and-commentary/europe-unveils-new-evidence-based-guidelines-to-advance-safer-platform-design-for-minors/

- The Gender Gap: Understanding and responding to girls' and boys' online .... https://www.internetmatters.org/wp-content/uploads/2026/01/Internet-Matters-gender-gap-research-briefing-jan-2026.pdf

- Online Grooming Statistics: Market Data Report 2026. https://worldmetrics.org/online-grooming-statistics/

- Online grooming - WeProtect Global Alliance. https://www.weprotect.org/thematic/online-grooming/

- Roblox Child Exploitation Lawsuit | February 2026 Updates. https://www.consumernotice.org/legal/roblox-lawsuit/

- Parents ask Roblox board to stop attempts to force lawsuits out of the .... https://abcnews.go.com/US/hundreds-parents-roblox-board-stop-attempts-force-lawsuits/story?id=129739569

- Spike in online crimes against children a “wake-up call”. https://www.missingkids.org/blog/2025/spike-in-online-crimes-against-children-a-wake-up-call

- 20 Million Reports: The Online Child Exploitation Crisis. https://www.dataforadifference.com/ignite/20-million-reports-the-online-child-exploitation-crisis

- Synthetic Media Detection: Tools and Methods for Spotting Deepfakes in .... https://stateofsurveillance.org/articles/technical/synthetic-media-detection-deepfake-tools-2026/

- 7 Best Deepfake Detector Tools & Techniques (January 2026). https://www.unite.ai/best-deepfake-detector-tools-and-techniques/

- Joint Statement on Artificial Intelligence and the Rights of the Child. https://www.ohchr.org/sites/default/files/statements/2026-01-29-joint-stm-itu-crc-en.pdf

- EU Tightens Rules on Children’s Data Protection in 2025. https://www.gdprregister.eu/news/eu-tightens-childrens-data-protection/

- Commission publishes guidelines on the protection of minors. https://digital-strategy.ec.europa.eu/en/node/13971/printable/pdf

- Protecting children online: What to expect in 2026 | ReedSmith. https://www.reedsmith.com/our-insights/blogs/technology-law-dispatch/102mela/protecting-children-online-what-to-expect-in-2026/

- Age assurance regulation for social media to go global in 2026. https://www.biometricupdate.com/202601/age-assurance-regulation-for-social-media-to-go-global-in-2026

- 2026 Year in Preview: Global Minors’ Privacy and Online Safety .... https://www.wsgrdataadvisor.com/2026/01/2026-year-in-preview-global-minors-privacy-and-online-safety-predictions/

- Child rights and AI: CRC adopts first global framework with partners. https://www.ohchr.org/en/statements/2026/02/child-rights-and-ai-crc-adopts-first-global-framework-partners

- High-level launch and signing ceremony of the Joint Statement on ... - ITU. https://www.itu.int/en/ITU-D/Cybersecurity/Pages/COP/2026/High-level-launch-and-signing-ceremony-of-the-Joint-Statement-on-Artificial-Intelligence-and-the-Rights-of-the-Child.aspx

- Gemini AI for Kids: The Complete Parent’s Guide to Safety, Learning .... https://techtich.com/gemini-ai-for-kids-a-parents-guide-to-safety-educational-benefits-smart-controls/

- Guide your child's Gemini Apps experience - Google Help. https://support.google.com/families/answer/16109150?hl=en

- Section 4: School / Service Child Protection Policy. https://adderleyprimary.co.uk/wp-content/uploads/2025/10/Online-Safety-Policy-2025-2026.pdf

- Minimising the risks children and young people face online. https://commission.europa.eu/news-and-media/news/minimising-risks-children-and-young-people-face-online-2025-07-14_en

- Thorn and WeProtect Global Alliance Release Report on New and Emerging .... https://www.thorn.org/press-releases/thorn-and-weprotect-global-alliance-release-report-on-new-and-emerging-technologies-impacting-online-child-safety/